How RSAs Killed the ETA Star

The deprecation of Expanded Text Ads (ETAs) is a continuation of Google’s strategy to automate the paid search customer journey using Natural Language Processing (NLP) and Machine Learning. As AI advances, search engines are using these technologies to improve the user experience: by understanding the relationships between the words in user queries and advertiser content, they can generate the best possible customer journey for every query.

To generate ad revenue, a search engine’s core service has to be valuable enough for end users to visit in the first place. It follows that a search engine’s primary goal is to serve the end user: helping them quickly find exactly what they want, surfacing the most relevant results first, and maximizing the likelihood that they are satisfied with the post-click experience. From this point of view, it is important to keep tabs on the changes that search engines, particularly Google, are making.

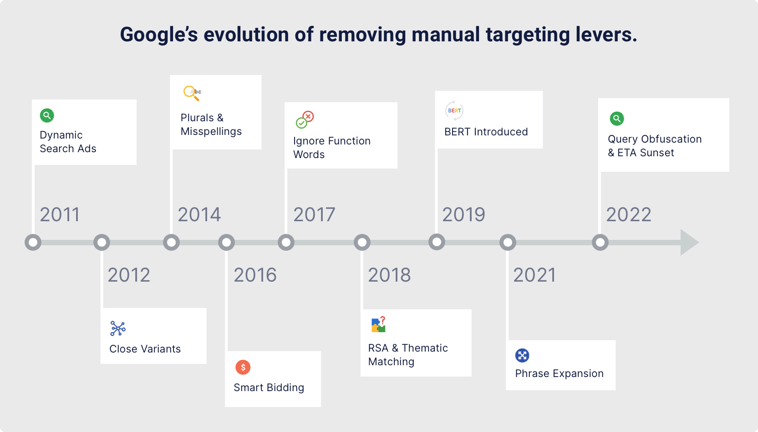

Google has a clear track record of taking manual levers away from advertisers and rolling out more automation throughout the PPC chain, driven by advances in Natural Language Processing and Machine Learning. By dynamically tightening the semantic match between queries and ads, search engines generate more revenue from higher CPCs while delivering a better user experience.

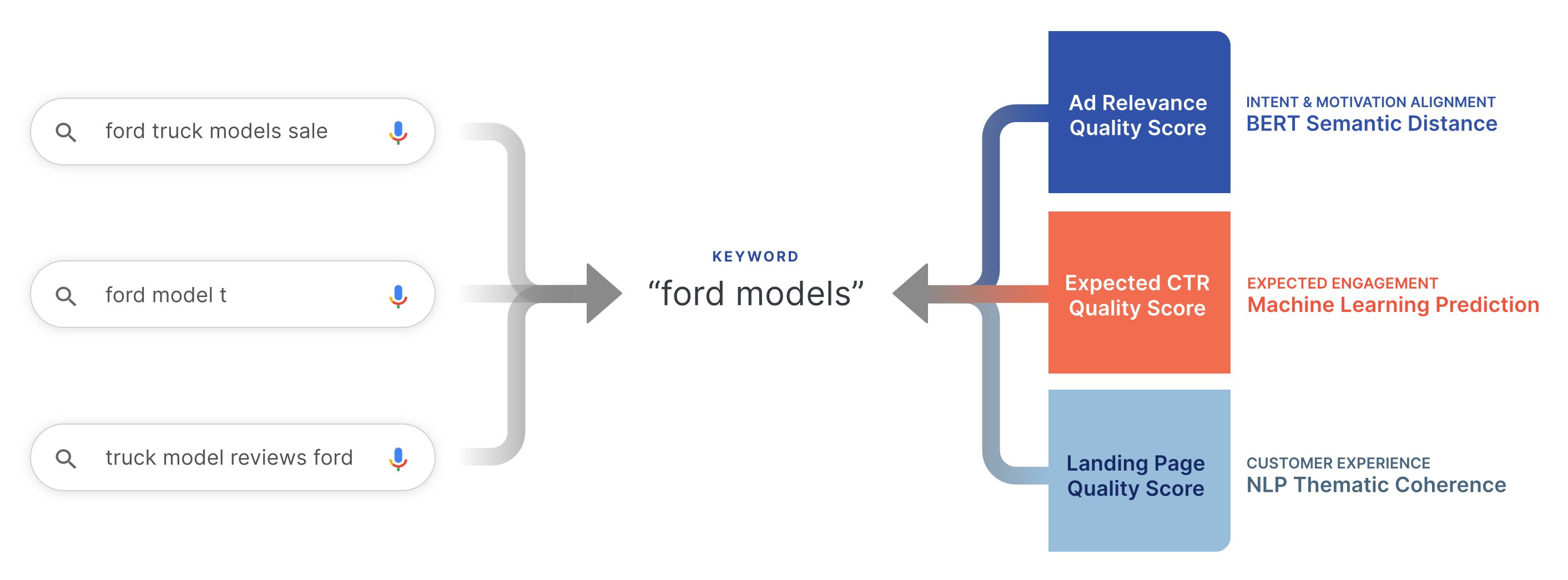

In 2012, Google first rolled out “close variants,” blurring the meaning of an “EXACT” match, which is currently defined as “Ads may show on searches that are the same meaning as your keyword.” What matters now is not the exact words but the meaning behind the search, and how tightly that meaning aligns with the possible customer journeys that could be presented in the results. To understand what a user means, search engines draw on extensive historical and real-time contextual data about the user, such as browsing history, search history, and device data like geo-location, browser and model. All of this rich, query-side data is fed into advanced NLP models to categorize what the user wants to do and why.

The what and why behind the query can be defined as 1) Intent and 2) Motivation. Four common intent categories referenced in the SEM channel are Transactional, Informational, Navigational, and Commercial. These explain what a user wants to do - “find a truck sale” or “compare truck reviews,” for example. Motivations reflect an individual consumer’s value system: what is important to them. For example, whether I am looking to buy or compare trucks, safety features and ratings are likely to be highly motivating factors.

So, with the massive amount of data search engines possess on the query side of the equation, how can a search engine objectively score the relationship between the meaning of a search query and a bare keyword?

The answer is that it cannot, at least not from the keyword alone. The rich data set needed to understand what an advertiser means by a keyword comes from the content of ads and landing pages, along with historical data from similar user queries. All of this linguistic data has to be converted into mathematical terms so that equations can measure the meaning of a query and its fit with every possible pathway from ad to landing page. With Responsive Search Ads (RSAs), Google is not only analyzing ad and landing page content; it is also computing the possible variations of every ad part in real time to determine and deliver the version that best fits the meaning of the user query.
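
To make the idea of “converting linguistic data into mathematical terms” concrete, here is a minimal sketch in Python. It is an illustration only, not Google’s method: it assumes a simple bag-of-words vector and cosine similarity as a stand-in for the learned language models a search engine would actually use, and the function names (`vectorize`, `cosine`, `best_ad`) and sample ad parts are hypothetical. It enumerates every headline and description pairing and returns the one closest to the query.

```python
import itertools
import math
import re
from collections import Counter


def vectorize(text):
    """Turn text into a bag-of-words count vector (a toy stand-in for learned embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_ad(query, headlines, descriptions):
    """Score every headline/description pairing against the query; return the top one."""
    query_vec = vectorize(query)
    scored = []
    for headline, description in itertools.product(headlines, descriptions):
        score = cosine(query_vec, vectorize(headline + " " + description))
        scored.append((score, headline, description))
    return max(scored, key=lambda item: item[0])


if __name__ == "__main__":
    # Hypothetical ad parts, reusing the truck examples from this article.
    headlines = ["Compare Truck Safety Ratings", "Truck Sale This Weekend"]
    descriptions = [
        "Read reviews and crash test scores.",
        "Low prices on new trucks in stock.",
    ]
    score, headline, description = best_ad(
        "compare truck reviews", headlines, descriptions
    )
    print(f"{score:.2f} | {headline} | {description}")
```

Even in this toy version, the number of pairings grows multiplicatively with each added headline or description, which hints at why evaluating every variation of every ad part in real time is a significant computational step.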

Ref: https://www.blog.google/products/ads/dynamic-search-ads-are-now-more/

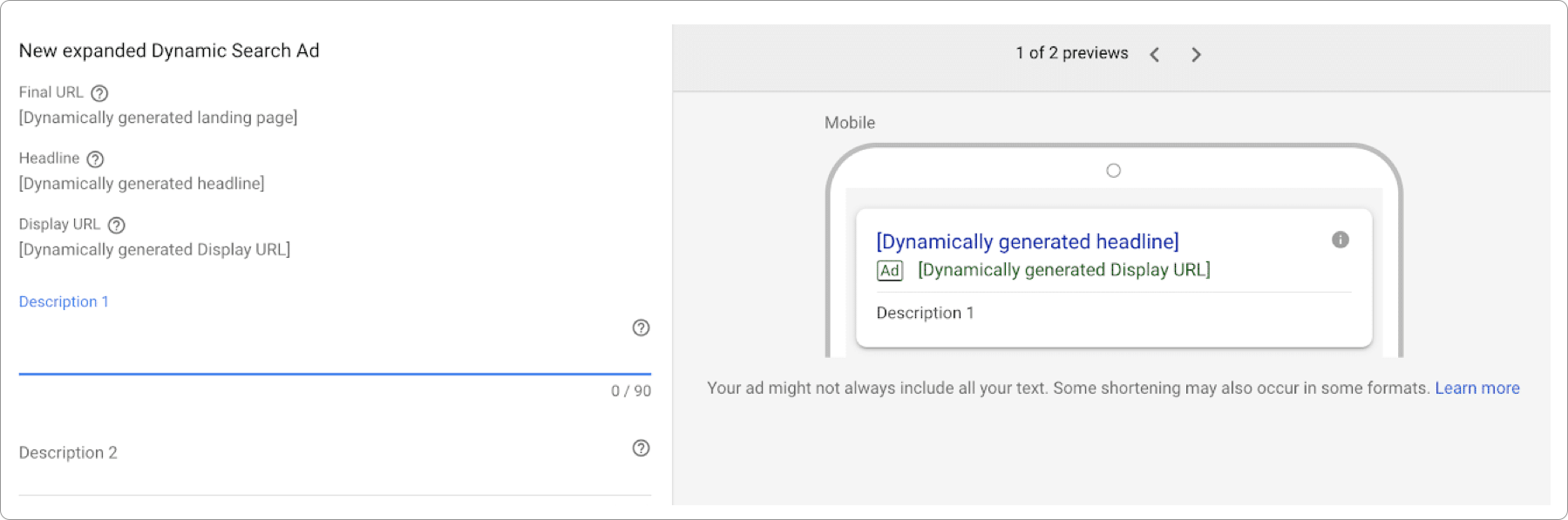

Despite all of these advancements by Google in Natural Language Processing and Machine Learning algorithms, Dynamic Search Ads (DSAs) still account for only a small percentage of Google traffic. Why is that? Because, although Google has finally tuned its query-to-ad matching algorithms, it has not come far enough with Natural Language Generation to create ad copy, descriptions in particular. Part of the reason is that the content available at landing page URLs is unpredictable and often sparse, which leads to imperfect AI-generated ad copy. But even if that problem is solved, fully automated ad copy faces two issues: 1) definition of the target audience, and 2) human creativity and empathy.

Creating compelling ad copy at scale that addresses intents and motivations requires human knowledge of your target audience, your product or service, your industry and competitors, and your seasonality and promotions. The way marketers communicate their target audience to Google today is through the content of the ad, which defines the targeted topics, intents and motivations. When Google generates content solely from a product or service landing page, that content is not sufficient to determine which intents and motivations to target. Additionally, particularly when writing descriptions that speak to specific values, emotions and motivations, human creativity and empathy are still key to producing persuasive ad copy.